This AI Model Integrates Feature Pyramids into Vision Transformers to Enhance Their Capability - MarkTechPost

Microsoft Improves Transformer Stability to Successfully Scale Extremely Deep Models to 1000 Layers | Synced

Make Every feature Binary: A 135B parameter sparse neural network for massively improved search relevance - Microsoft Research

Transformer : State-of-the-art Natural Language Processing | by Kushagra Mittal | Geek Culture | Medium

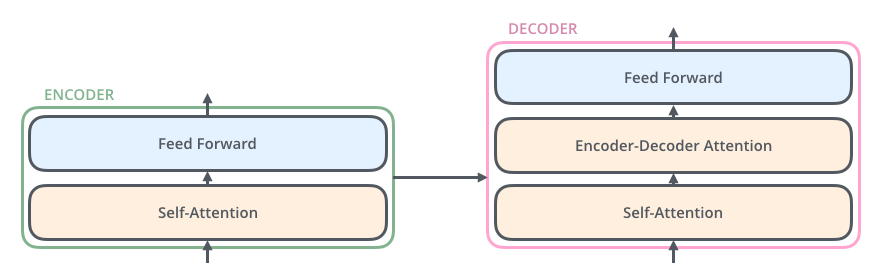

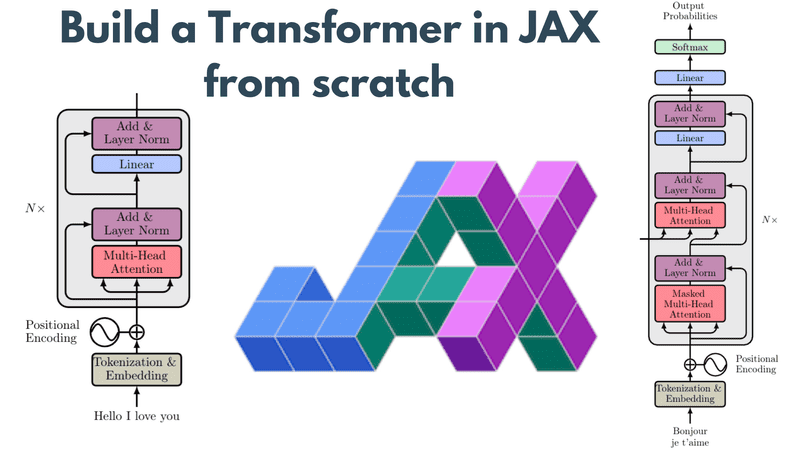

Transformer Architecture: How Transformer Models Work? | by Muhammet Nusret Özateş | CARBON CONSULTING | Medium

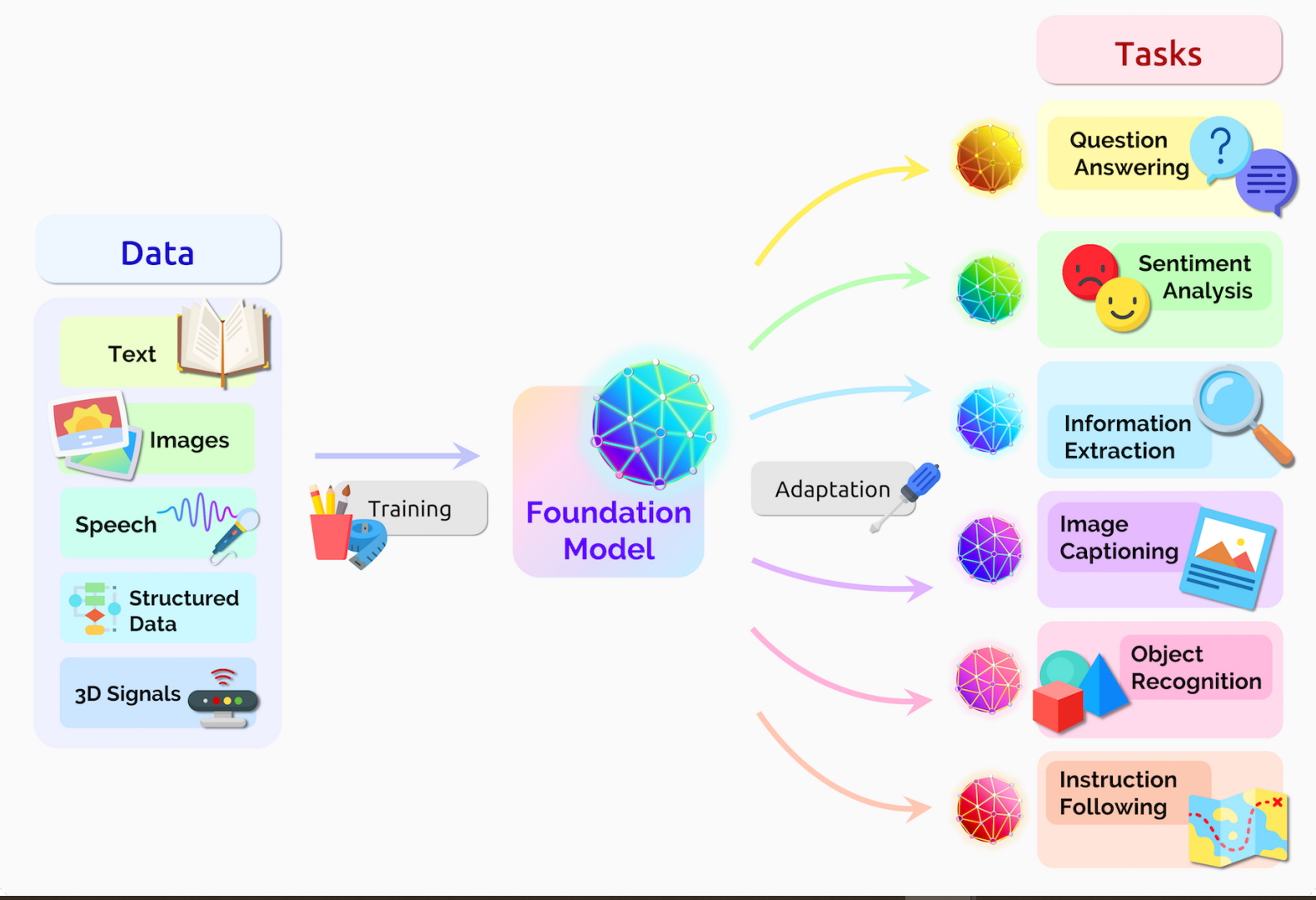

![A general framework for Transformer-based language model pre-training [8].](https://www.researchgate.net/publication/358603518/figure/fig1/AS:1123583451508737@1644894578387/A-general-framework-for-Transformer-based-language-model-pre-training-8.png)

A general framework for Transformer-based language model pre-training [8].

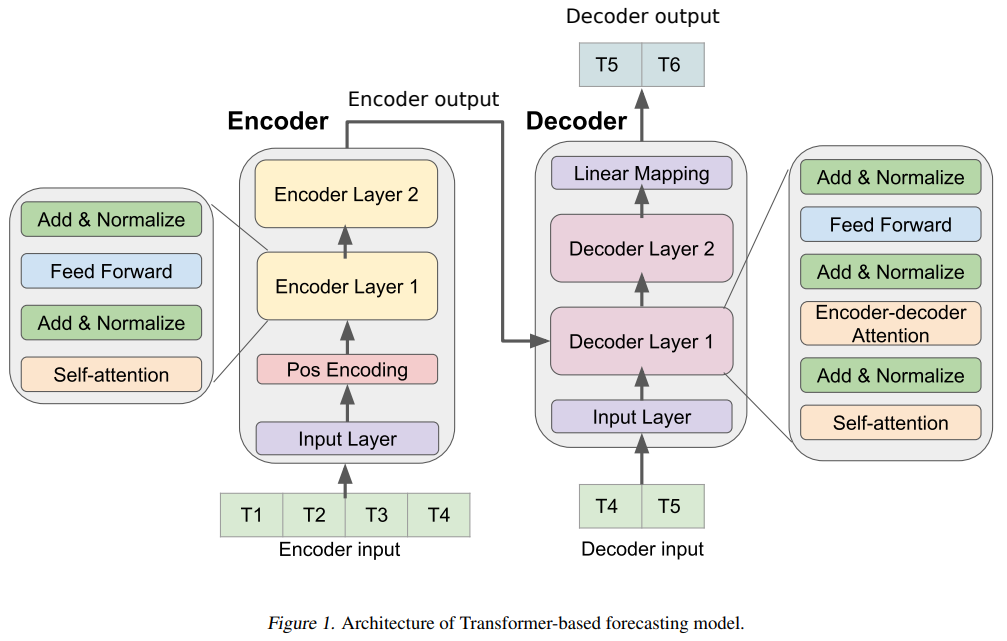

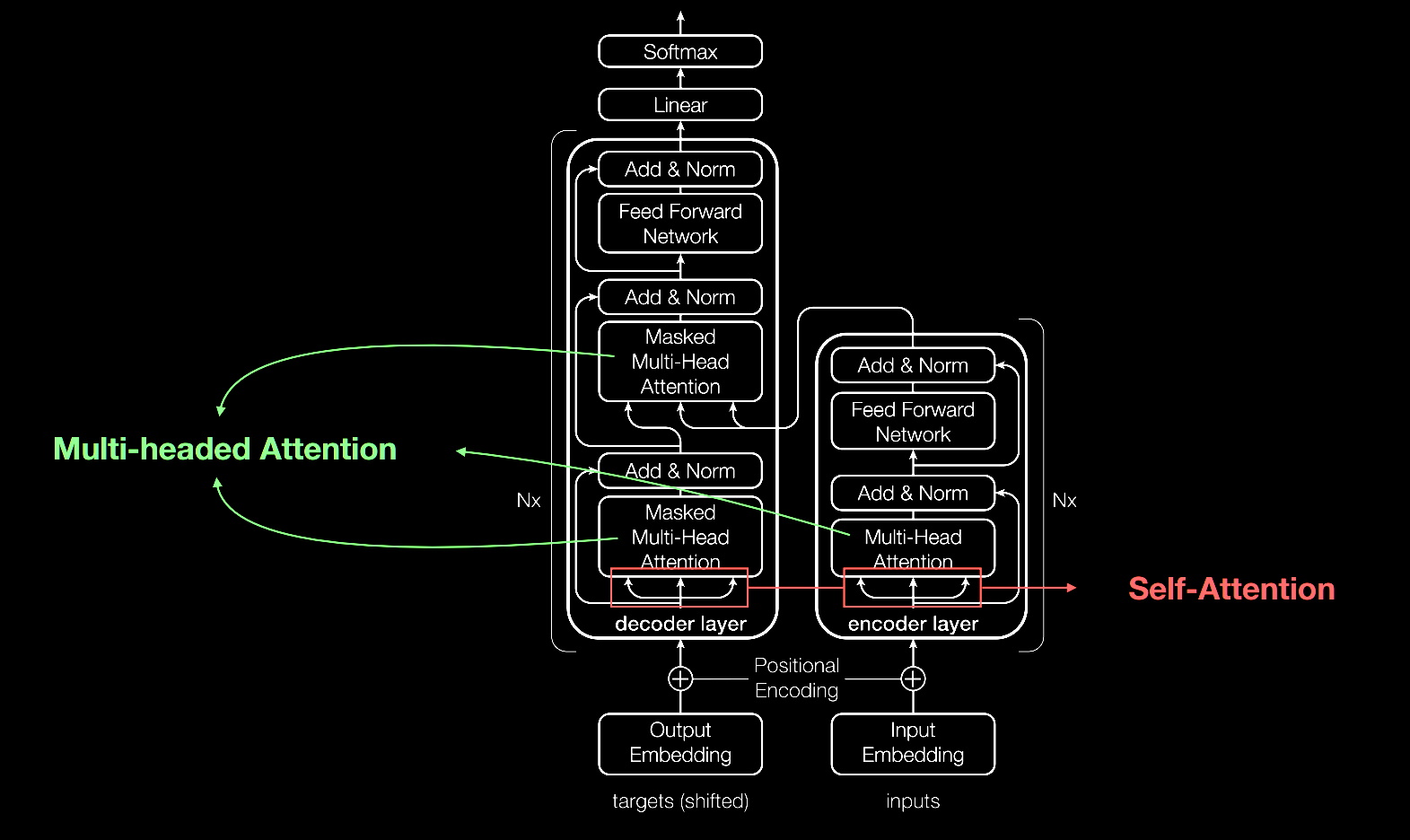

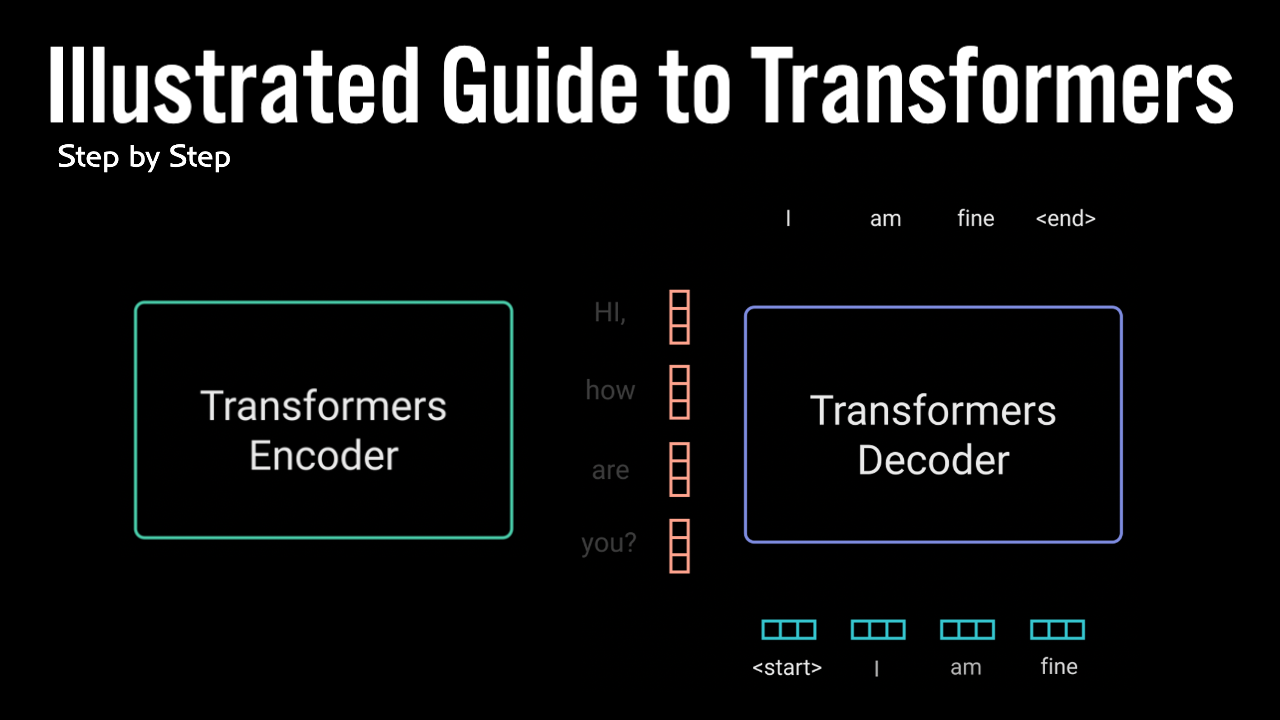

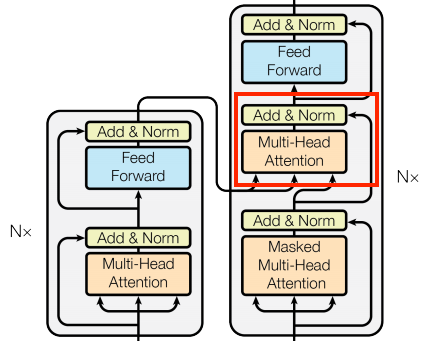

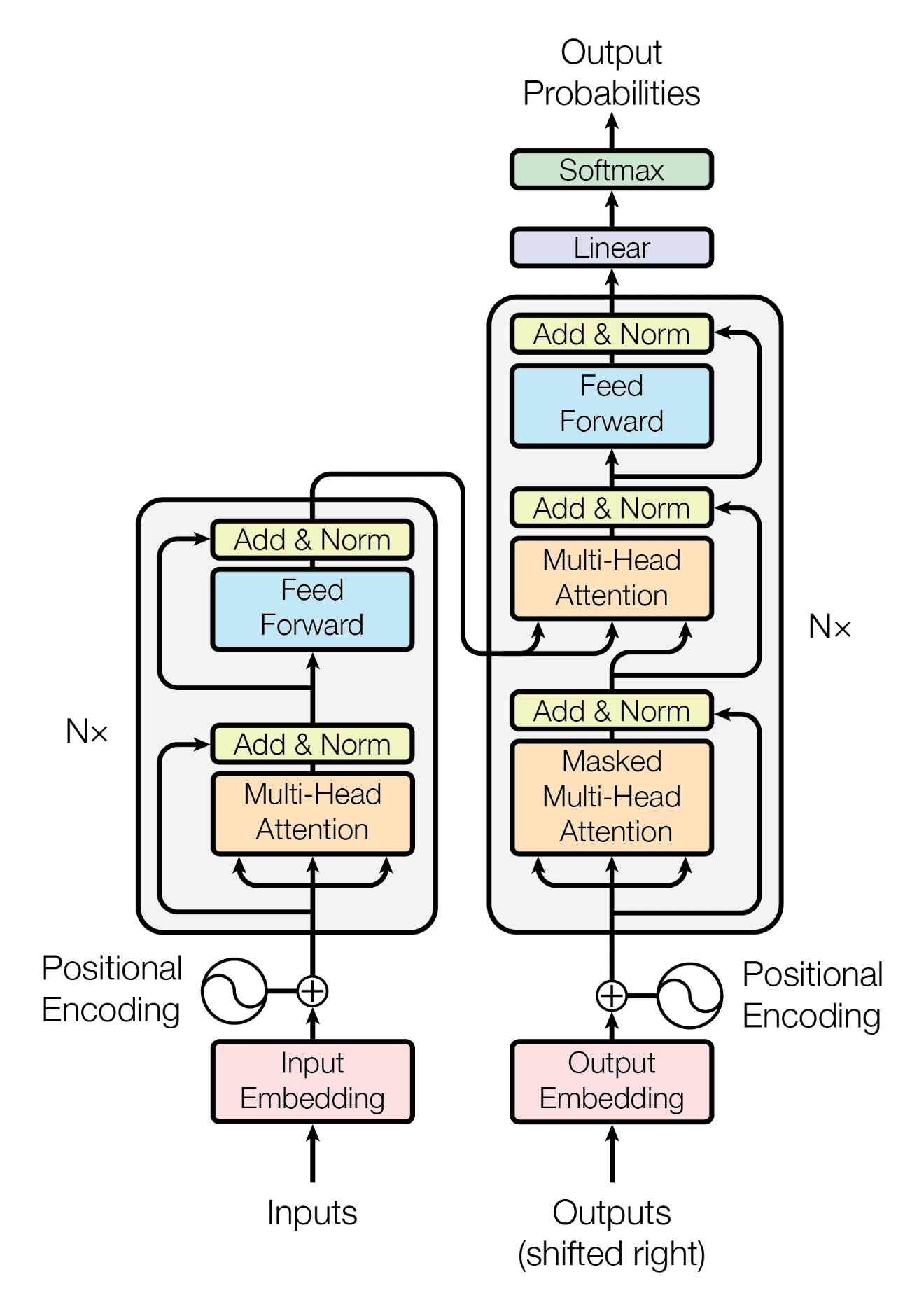

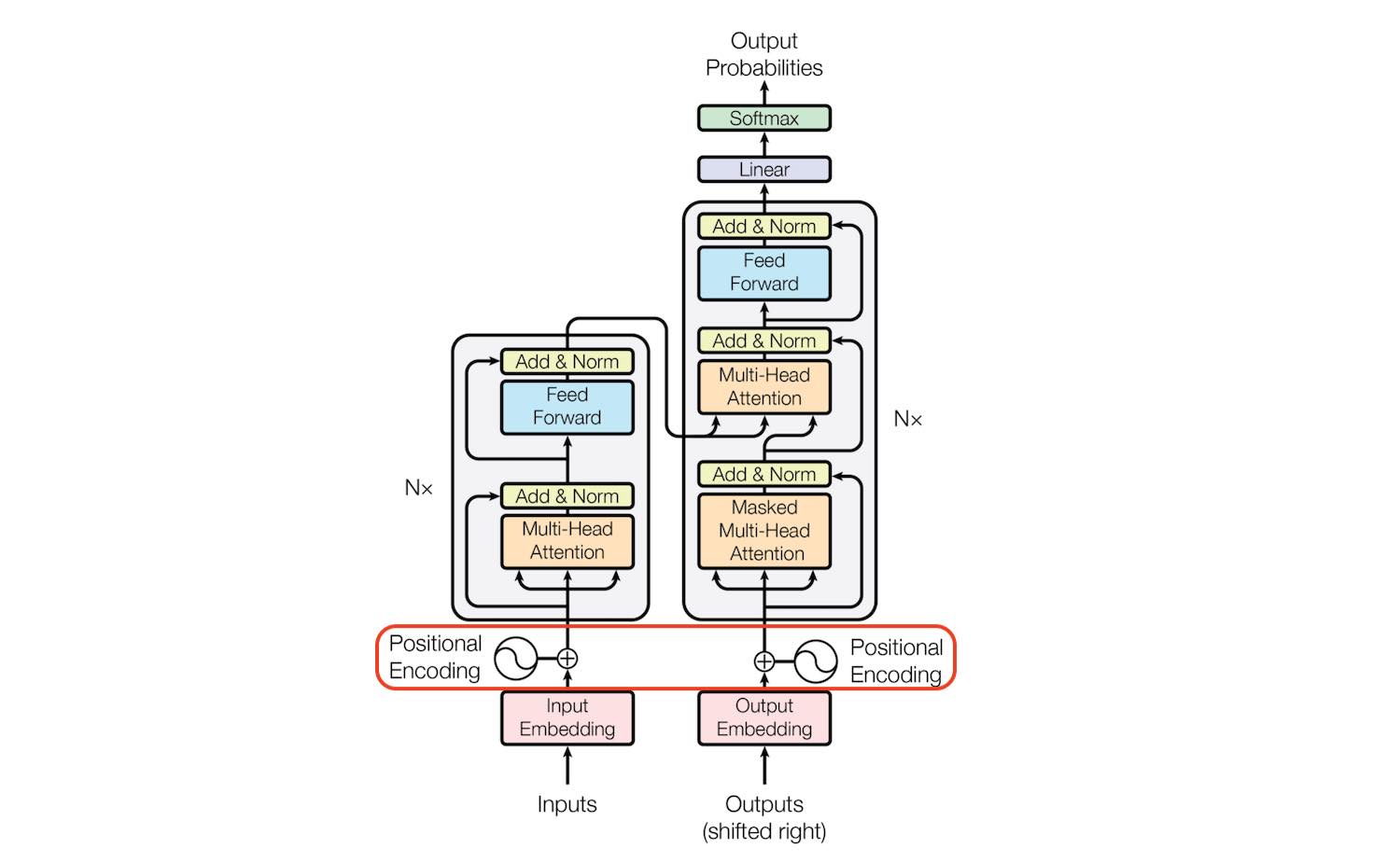

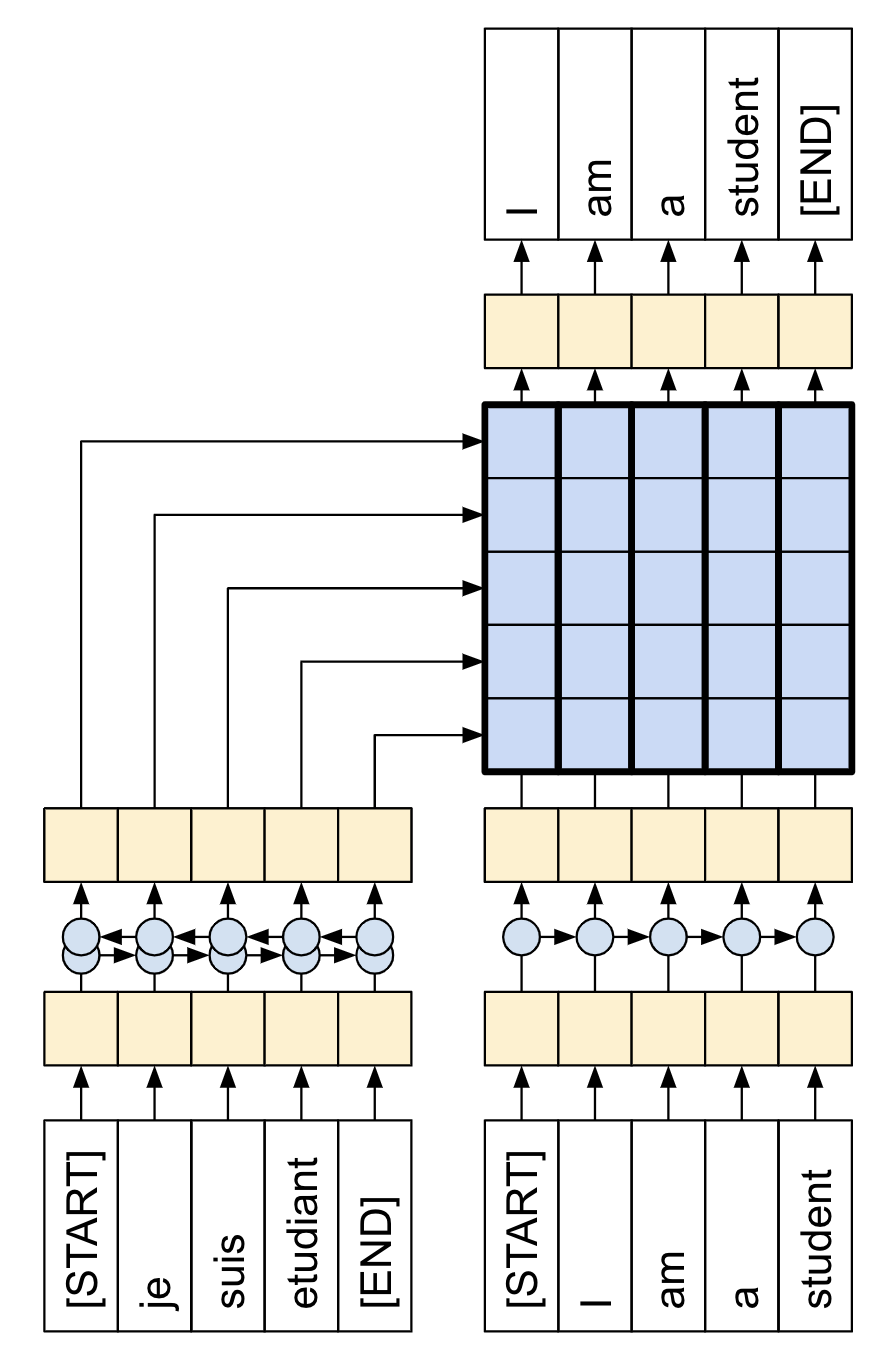

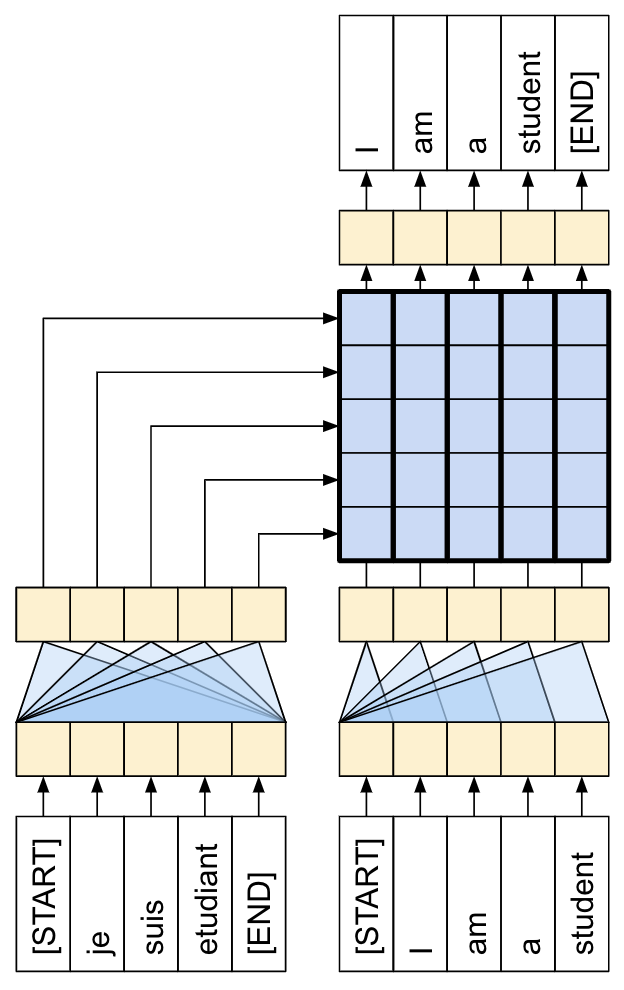

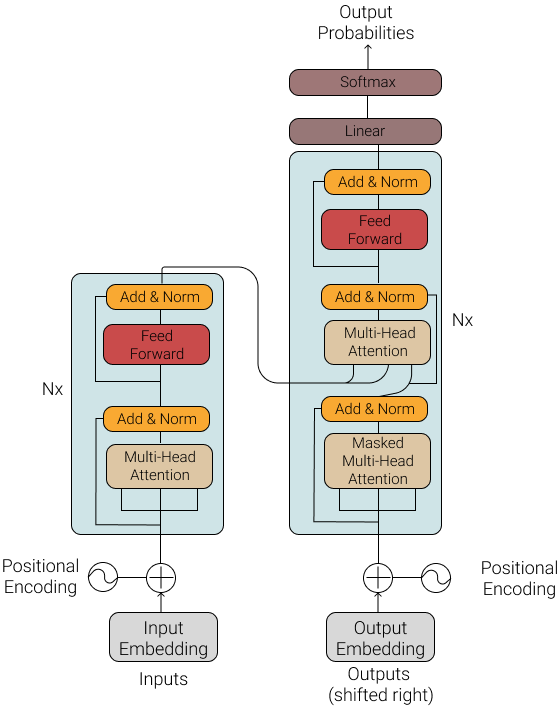

Transformer model architecture (this figure's left and right halves...

Warsaw U, OpenAI and Google's Hourglass Hierarchical Transformer Model Outperforms Transformer Baselines | Synced